How do you scrape the web if you are not a programmer? Use an API. Here are three options we recommend.

Businesses of every size benefit as this technology matures. Companies can use web scraping tools to collect important data about any website or consumer they are interested in.

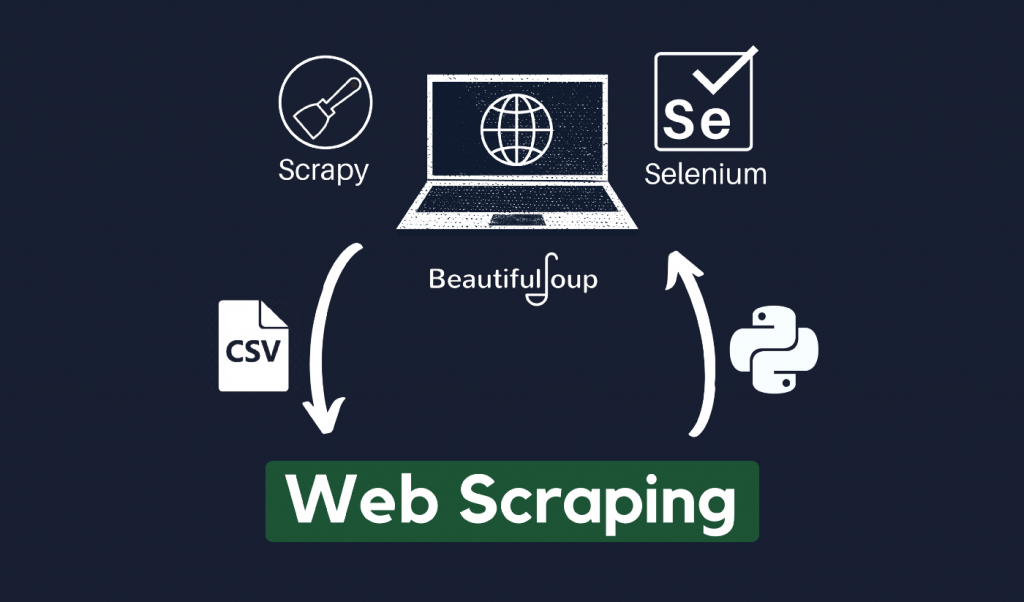

To begin, web scraping is a way of collecting structured online data automatically. Copying and pasting information from a webpage by hand is the same task a web scraper performs, only on a smaller, manual scale.

Unlike the time-consuming practice of gathering data by hand, web scraping automates the collection of millions of data points from across the internet. If you are not a coder, or if you are but want to make the process easier, you can use a web scraping API. You only need to supply the URL, and the service does the rest.
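To make the "you only need the URL" idea concrete, here is a minimal sketch of what a call to such a service might look like. The endpoint `https://api.example.com/v1/scrape` and the `url` and `api_key` parameter names are placeholders invented for illustration; each real provider documents its own.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint and parameter names, used only for illustration.
API_ENDPOINT = "https://api.example.com/v1/scrape"


def build_scrape_request(target_url: str, api_key: str) -> urllib.request.Request:
    """Build a GET request that asks the scraping service to fetch target_url."""
    # urlencode escapes the target URL so it travels safely as a query value.
    query = urllib.parse.urlencode({"url": target_url, "api_key": api_key})
    return urllib.request.Request(f"{API_ENDPOINT}?{query}", method="GET")


def scrape(target_url: str, api_key: str, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON body the service is assumed to return."""
    request = build_scrape_request(target_url, api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a real provider, you would swap in its endpoint and parameter names and then call something like `scrape("https://example.org/products", "YOUR_KEY")`.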

Because automating and streamlining operations is vital, many modern firms rely on an API for this purpose.

What Is An API?

An API (application programming interface) lets organizations open up the capabilities of their systems to third-party developers, trade partners, and internal departments. Thanks to a defined interface, services and products can interact with one another and benefit from one another's data and capabilities.

The market certainly has a plethora of APIs to offer you, but they do not all function in the same manner.

Codery

Codery enables you to collect data from scraped web pages. Choose the page elements you need and build a complete data structure in whatever shape suits you. It is also quick and easy to use. The Codery API is billed as one of the best and fastest web crawling tools available online.

It comes with reliable proxies, drawn from a pool of hundreds of millions, so you can get the information you need without worrying about being blocked.

Page2API

Page2API is a robust API that provides a wide range of services and capabilities. To begin with, it scrapes web pages and transforms the HTML into well-organized JSON. You can also run long-running scraping sessions in the background and receive the results through a webhook (callback URL).
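The HTML-to-JSON step is easier to picture with a small example. The sketch below is not Page2API's implementation; it only uses Python's standard `html.parser` module to pull a page title and its links out of raw HTML and emit them as JSON. The class name `LinkExtractor` and the output shape are choices made for this illustration.

```python
import json
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collect the page title and every link that has an href."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.links = []
        self._in_title = False
        self._current_link = None  # link being read, or None outside <a>

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self._current_link = {"href": href, "text": ""}

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        elif self._current_link is not None:
            self._current_link["text"] += data

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag == "a" and self._current_link is not None:
            self._current_link["text"] = self._current_link["text"].strip()
            self.links.append(self._current_link)
            self._current_link = None


def html_to_json(html: str) -> str:
    """Turn raw HTML into a JSON document with the title and links."""
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return json.dumps({"title": parser.title.strip(), "links": parser.links}, indent=2)
```

A hosted scraping API does this kind of extraction for you, typically driven by selectors you supply, so you receive structured data instead of markup.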

Page2API supports custom scenarios, in which you create a series of instructions to wait for certain elements, run JavaScript, manage pagination, and much more. For difficult-to-scrape websites, it offers the option of Premium (Residential) Proxies, which are located in 138 countries around the world.

Apify

Apify allows for the scraping of even the most popular websites. You can schedule your jobs with a cron-like service and save large amounts of data in dedicated storage. Apify services may be written in JavaScript, Python, or any other language, as long as the code can be packaged as a Docker container.

It connects many web services and APIs and allows data to flow between them. Custom computation and data processing steps can be added. External services can be triggered through webhooks, directly from your code, or with integration tools like Zapier or Keboola.
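For readers curious what sits on the receiving end of a webhook, here is a minimal sketch of a receiver built with Python's standard `http.server` module. The `event` and `data` field names are assumptions made for this example, not a documented payload format from Apify or any other provider, and `run_receiver` is a hypothetical helper.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_webhook_payload(raw_body: bytes) -> dict:
    """Decode a JSON webhook body and check the fields this sketch assumes."""
    payload = json.loads(raw_body.decode("utf-8"))
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    # "event" and "data" are hypothetical field names, not a documented schema.
    for field in ("event", "data"):
        if field not in payload:
            raise ValueError(f"missing field: {field}")
    return payload


class WebhookHandler(BaseHTTPRequestHandler):
    """Accept POSTed webhook calls and reject malformed bodies with 400."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = parse_webhook_payload(self.rfile.read(length))
        except ValueError as error:  # also covers invalid JSON and bad UTF-8
            self.send_error(400, str(error))
            return
        print(f"received event: {payload['event']}")
        self.send_response(204)  # acknowledged, no response body
        self.end_headers()


def run_receiver(port: int = 8000) -> None:
    """Serve on localhost until interrupted."""
    HTTPServer(("127.0.0.1", port), WebhookHandler).serve_forever()
```

Calling `run_receiver()` starts a listener on port 8000. A production receiver would also need to verify that each call really comes from the scraping service, using whatever signing or secret mechanism that service provides.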